CPU Cache Coherence in Java Concurrency

Jakob Jenkov

In some of the other tutorials in this Java Concurrency tutorial series you might have read, or heard me say in a video, that when a Java thread writes to a volatile variable, or exits a synchronized block, all variables visible to the thread are flushed from the CPU cache to main memory.

This is not actually what happens. In this short tutorial I will explain what really happens. I have made a video version of this tutorial too, if you prefer video:

Java Concurrency + CPU Cache Coherence

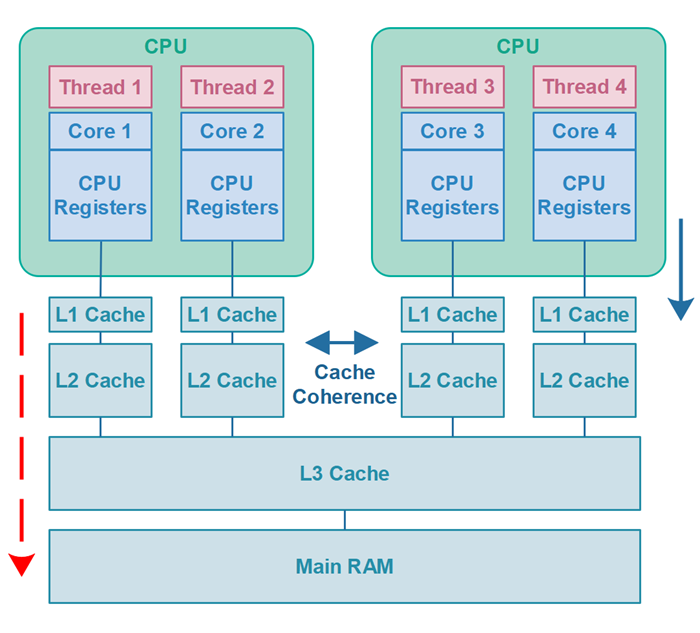

What actually happens is that all variables visible to the thread which are stored in CPU registers will be flushed to main RAM (main memory). On the way to main RAM the variables may be stored in the CPU cache. The CPU and motherboard then use their cache coherence mechanisms to make sure that all other CPUs' caches can see the variables in the first CPU's cache.
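From the Java side, this guarantee can be sketched as follows. This is only a minimal illustration, not code from the tutorial series: the class name `VolatileVisibilityDemo`, the fields `payload` and `ready`, and the method `runOnce()` are all hypothetical names chosen for this example. The writer thread stores a value in a plain field and then writes to a volatile flag; once the reader thread sees the flag, the Java Memory Model guarantees it also sees the plain field, regardless of whether the data travelled via the CPU cache or main RAM.

```java
// Hypothetical demo class; all names are made up for illustration.
class VolatileVisibilityDemo {

    private static int payload = 0;                  // plain, non-volatile field
    private static volatile boolean ready = false;   // volatile flag

    // Runs one writer/reader round and returns the value the reader saw.
    static int runOnce() {
        payload = 0;
        ready = false;
        int[] seen = new int[1];

        Thread reader = new Thread(() -> {
            // Spin until the volatile write becomes visible.
            while (!ready) {
                Thread.yield();
            }
            // The volatile read above guarantees that the earlier
            // write to payload is visible here too.
            seen[0] = payload;
        });
        reader.start();

        payload = 42;   // plain write, ordered before the volatile write
        ready = true;   // volatile write publishes payload as well

        try {
            reader.join();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException(e);
        }
        return seen[0];
    }

    public static void main(String[] args) {
        System.out.println(runOnce()); // prints 42
    }
}
```

Note that the code never states where the value is stored. It relies only on the visibility guarantee of the volatile write and read.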

The hardware may even choose not to flush the variables all the way to main memory, but only keep them in the CPU cache until the part of the CPU cache storing the variables is needed for other data. At that time the CPU cache can be flushed to main memory. However, this is not visible to the code running on the CPU. As long as the code gets the data it requests from any given memory address, it doesn't matter whether the returned data only exists in the CPU cache, or whether it also exists in main RAM.

You don't have to worry about how CPU cache coherence works. There is of course a small performance hit for this CPU cache coherence, but it's better than writing the variables all the way down to main RAM and back up into the other CPU caches.
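Because the hardware handles cache coherence, Java code only needs to rely on the guarantees of `volatile` and `synchronized`. Here is a minimal sketch of the synchronized case. The class `SynchronizedCounterDemo` and its methods are hypothetical names for this example only. Several threads increment a plain field inside a synchronized block, and exiting the block makes each update visible to the next thread that enters a block on the same lock.

```java
// Hypothetical demo class; all names are made up for illustration.
class SynchronizedCounterDemo {

    private final Object lock = new Object();
    private long count = 0;   // plain field, only accessed while holding lock

    void increment() {
        synchronized (lock) {
            count++;
        } // exiting the block makes the new count visible to other threads
    }

    long get() {
        synchronized (lock) {
            return count;
        }
    }

    // Starts the given number of threads, each incrementing the counter
    // incrementsPerThread times, and returns the final count.
    static long runDemo(int threads, int incrementsPerThread) {
        SynchronizedCounterDemo counter = new SynchronizedCounterDemo();
        Thread[] workers = new Thread[threads];

        for (int i = 0; i < threads; i++) {
            workers[i] = new Thread(() -> {
                for (int j = 0; j < incrementsPerThread; j++) {
                    counter.increment();
                }
            });
            workers[i].start();
        }

        for (Thread worker : workers) {
            try {
                worker.join();
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
                throw new IllegalStateException(e);
            }
        }
        return counter.get();
    }

    public static void main(String[] args) {
        System.out.println(runDemo(4, 100_000)); // prints 400000
    }
}
```

The final count is always the number of threads times the increments per thread, no matter which CPU each thread ran on or where the hardware kept the value in the meantime.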

Below is a diagram illustrating what I said above. The red, dashed arrow on the left represents my false statement from other tutorials - that variables were flushed from CPU cache to main RAM. The arrow on the right represents what actually happens - that variables are flushed from CPU registers to the CPU cache.
